
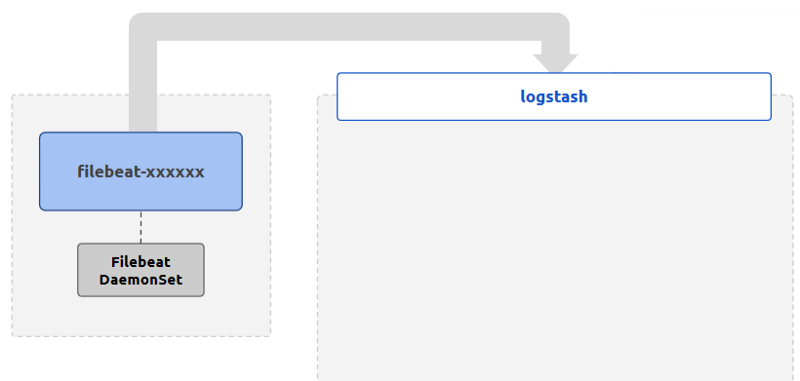

filebeat setup -e Step 7 – Start the filebeat daemon $ sudo chown root filebeat. filebeat test config -e Step 6 – Setup Assets Filebeat comes with predefined assets for parsing, indexing, and visualizing your data. By installing Filebeat as an agent on your servers, you’re able to collect log events and forward them to either Elasticsearch or Logstash for indexing. Password: "" Step 5 – To test your configuration file $. Well, Filebeat is a lightweight shipper for forwarding and centralizing log data and files.

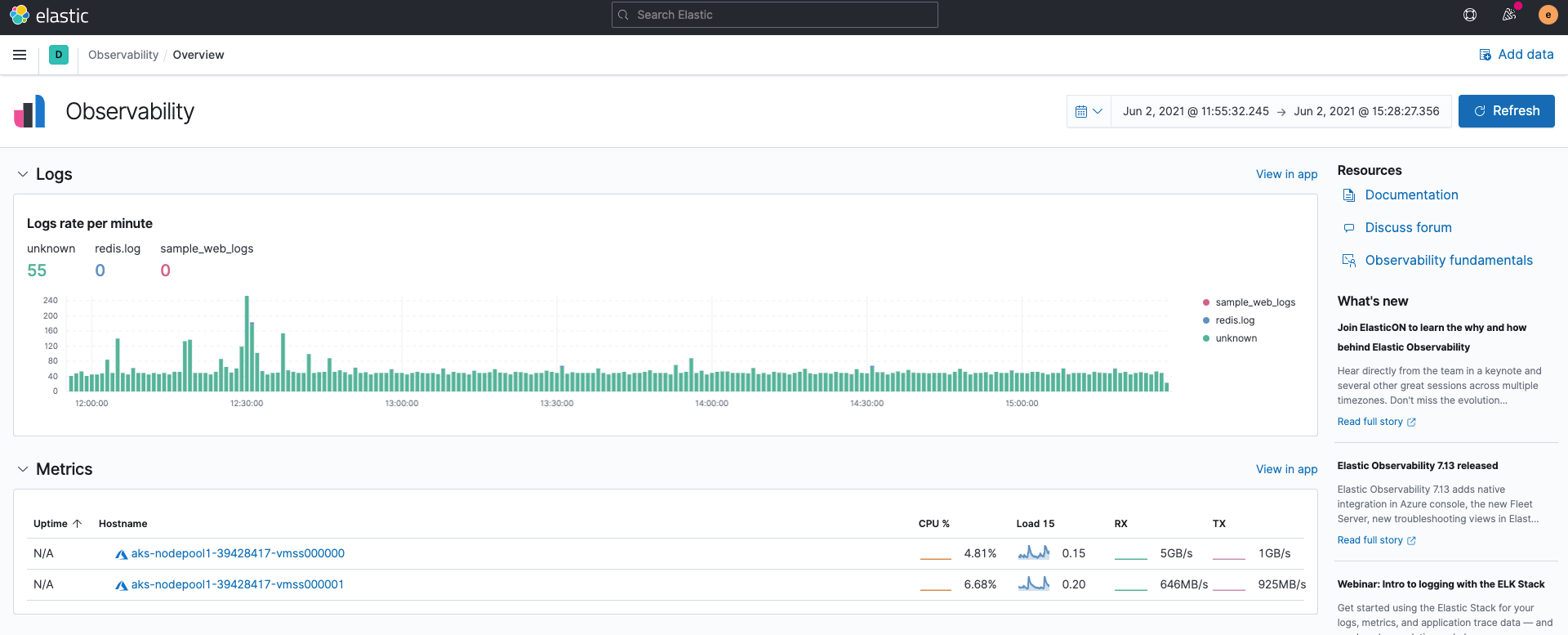

#- c:\programdata\elasticsearch\logs\* Step 3 – Configure output in filebeat.yml output.elasticsearch:Ĭa_trusted_fingerprint: "069dd4ec9161d86b6299a2823c1f66c5c7a1afd47550c8521bb07e6e0c4cf329" Step 4 – Configure Kibana in filebeat.yml setup.kibana: Right now we are unable to access GUI ('Invalid username or password. # Paths that should be crawled and fetched. Hi, recently we upgraded Wazuh's component from 4.4.0-1 to 4.4.3-1. # Change to true to enable this input configuration. # Unique ID among all inputs, an ID is required. # filestream is an input for collecting log messages from files. If you already have an ELK Stack already running, then the better. # Below are the input specific configurations. To make it easier for you to check the status of your cluster on one platform, we are going to deploy Elasticsearch and Kibana on an external server then ship logs from your cluster to Elasticsearch using Elastic’s beats ( Filebeat, Metricbeat etc ). This section explains how to log to a Docker installation of the Elastic Stack (Elasticsearch, Logstash and Kibana), using filebeat to send log contents to.

# you can use different inputs for various configurations. Most options can be set at the input level, so $ cd filebeat-8.3.3-linux-x86_64 Step 2 – Configure input in filebeat.yml # Each - is an input. Step 1 – Download a file beat pacage $ cd /opt
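The input, output, and Kibana settings from Steps 2 to 4 might fit together in filebeat.yml roughly as follows. This is only a sketch: the input id, the /var/log/*.log path, the hosts values, the username, and the ssl: nesting around ca_trusted_fingerprint are assumptions that follow the layout of the stock filebeat.yml and are not taken from the text; the password is left empty, as in the text.

```yaml
# Sketch of filebeat.yml combining Steps 2 to 4.
# Values marked "assumed" are illustrative and not from the original text.
filebeat.inputs:
  # filestream is an input for collecting log messages from files.
  - type: filestream
    # Unique ID among all inputs, an ID is required.
    id: my-filestream-id                # assumed
    # Change to true to enable this input configuration.
    enabled: true
    # Paths that should be crawled and fetched.
    paths:
      - /var/log/*.log                  # assumed
      #- c:\programdata\elasticsearch\logs\*

output.elasticsearch:
  hosts: ["https://localhost:9200"]     # assumed
  username: "elastic"                   # assumed
  password: ""                          # left empty, as in the text
  ssl:
    ca_trusted_fingerprint: "069dd4ec9161d86b6299a2823c1f66c5c7a1afd47550c8521bb07e6e0c4cf329"

setup.kibana:
  host: "localhost:5601"                # assumed
```

After editing the file, the configuration test from Step 5 is a reasonable way to check that it still parses before moving on to Steps 6 and 7.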
